When a user taps a deep link, where should they land? The product page? A campaign-specific landing screen? The home feed with the product highlighted? Each destination produces different conversion rates, and the only way to know which works best is to test. A/B testing deep link destinations lets you split traffic between different in-app screens and measure which one drives the most purchases, signups, or engagement.

For CTA testing, see CTA A/B Testing for App Install and Deep Links. For conversion funnel analysis, see Conversion Funnel Analysis for Deep Links.

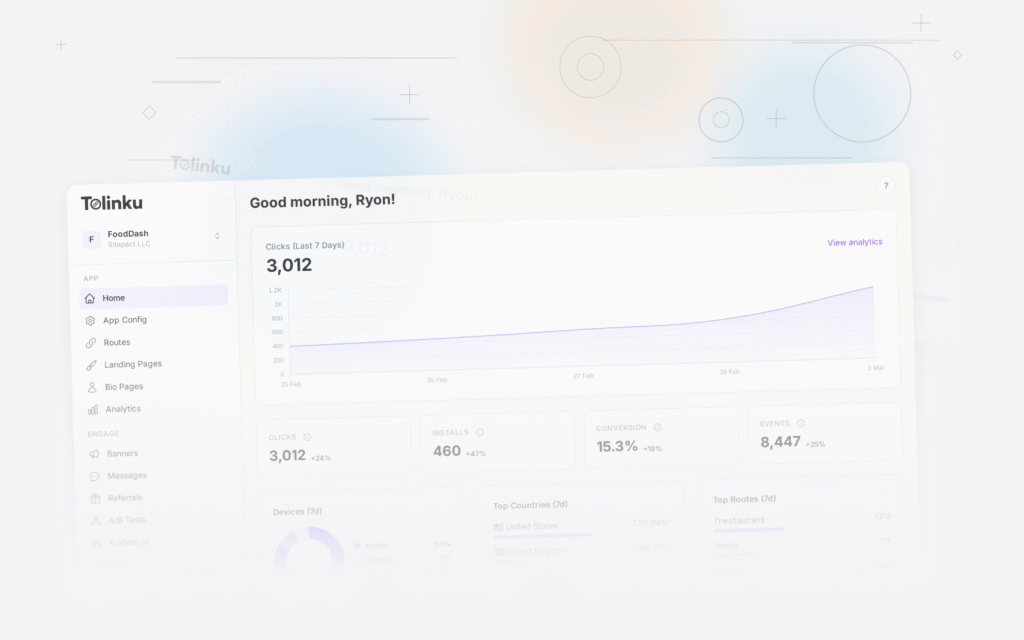

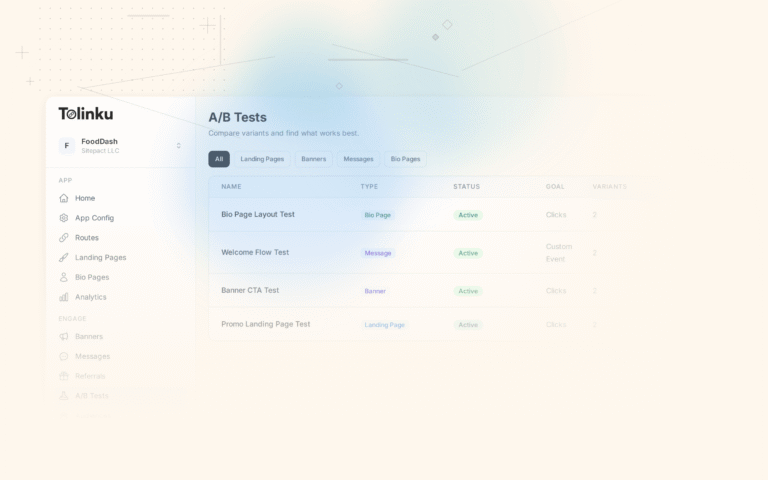

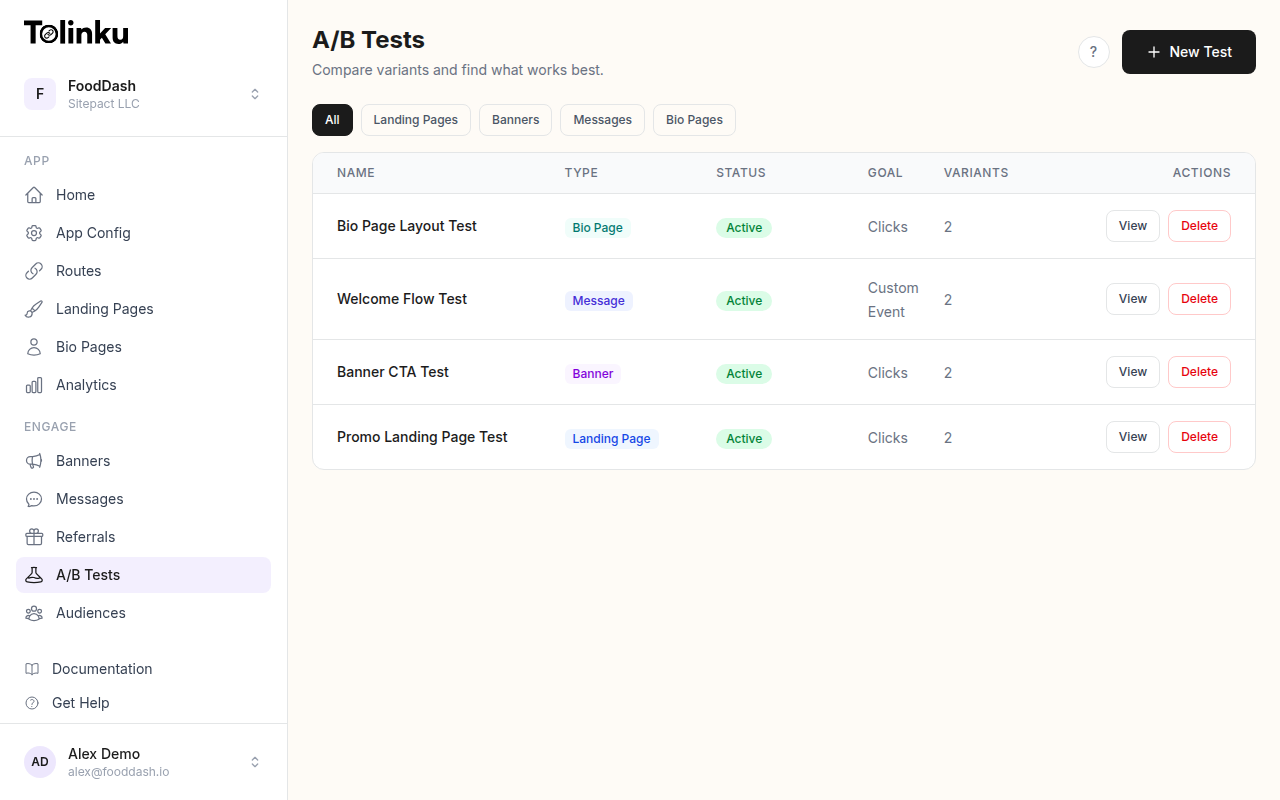

The A/B tests list page showing test names, status, types, and variant counts.


What to Test

Common Destination Comparisons

| Test | Variant A | Variant B | Primary Metric |
|---|---|---|---|
| Product link | Product detail page | Product in collection view | Purchase rate |
| Campaign link | Campaign landing page | Category page with banner | Conversion rate |
| Referral link | Referral welcome screen | Home feed with referral banner | Signup completion |
| Content share | Full article view | Article preview + signup prompt | Account creation |
| Re-engagement | Last viewed screen | Home with "what's new" | Session depth |
| Onboarding | Feature tour first | Content first | D7 retention |

When Destination Testing Matters

Destination testing has the highest impact when:

- High-traffic links: Ad campaigns, email blasts, popular shared content

- High-value actions: Purchase flows, subscription prompts, account creation

- New user flows: Deferred deep links where first impressions are critical

For low-traffic links (fewer than about 100 clicks per week), a destination test takes too long to reach statistical significance.
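To see why, estimate how many users a test needs. The following is a minimal sketch of the standard two-proportion sample-size formula. It assumes a two-sided test at α = 0.05 (z = 1.96) and 80% power (z ≈ 0.8416). It also assumes that each click comes from a distinct user. The function name and the example conversion rates are illustrative.

```javascript
// Approximate users needed per variant to detect a change from baseline rate p1
// to rate p2 with a two-sided two-proportion z-test.
// Defaults: alpha = 0.05 (zAlpha = 1.96), power = 0.80 (zBeta = 0.8416).
function sampleSizePerVariant(p1, p2, zAlpha = 1.96, zBeta = 0.8416) {
  const pBar = (p1 + p2) / 2;
  const numerator =
    zAlpha * Math.sqrt(2 * pBar * (1 - pBar)) +
    zBeta * Math.sqrt(p1 * (1 - p1) + p2 * (1 - p2));
  return Math.ceil((numerator * numerator) / (p2 - p1) ** 2);
}

// Example: detect a lift from 3% to 4% conversion with two variants
const perVariant = sampleSizePerVariant(0.03, 0.04);
const weeksAt100Clicks = Math.ceil((perVariant * 2) / 100);
console.log(perVariant, weeksAt100Clicks);
```

For a lift from 3% to 4%, the formula gives roughly 5,300 users per variant. A two-variant test on a link with 100 clicks a week would therefore need about two years of traffic.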

Implementation

Route-Level A/B Testing

Implement testing at the deep link routing layer:

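```javascript
// getActiveExperiment, assignVariant, navigateTo, and analytics are app-level helpers.
// matchRoute and fillTemplate are hypothetical helpers that extract route params
// such as :id and substitute them into the variant's destination template.
function routeDeepLink(url, userId) {
  // Parse once and reuse. Assumes an https link (e.g. https://example.com/product/123);
  // with custom schemes, the first path segment may parse as the host.
  const parsed = new URL(url);
  const path = parsed.pathname;
  const params = Object.fromEntries(parsed.searchParams);

  // Check for active experiments on this route
  const experiment = getActiveExperiment(path);

  if (experiment) {
    const variant = assignVariant(userId, experiment.id);

    // variant.destination is a template like '/product/:id'; fill in real values
    const routeParams = matchRoute(experiment.routePattern, path);
    const destination = fillTemplate(variant.destination, { ...params, ...routeParams });

    analytics.track('experiment_impression', {
      experimentId: experiment.id,
      variantId: variant.id,
      originalPath: path,
      actualDestination: destination,
    });

    return navigateTo(destination, params);
  }

  // No experiment, use default routing
  return navigateTo(path, params);
}
```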

Experiment Configuration

```javascript
const destinationExperiments = {
  product_destination_v2: {
    id: 'product_destination_v2',
    routePattern: '/product/:id',
    variants: [
      {
        id: 'product_page',
        weight: 50,
        destination: '/product/:id', // Direct product page
      },
      {
        id: 'product_in_collection',
        weight: 50,
        // Product visible in collection. :category is not part of the link,
        // so the app must look it up (e.g. from the product ID) before routing.
        destination: '/collection/:category?highlight=:id',
      },
    ],
    primaryMetric: 'purchase',
    secondaryMetrics: ['add_to_cart', 'time_on_screen', 'bounce_rate'],
    minSampleSize: 2000,
  },

  campaign_destination_v1: {
    id: 'campaign_destination_v1',
    routePattern: '/campaign/:slug',
    variants: [
      {
        id: 'landing_page',
        weight: 50,
        destination: '/campaign/:slug/landing', // Custom landing page
      },
      {
        id: 'category_with_banner',
        weight: 50,
        // Category + promotional banner; :category must also be looked up
        destination: '/category/:category?banner=:slug',
      },
    ],
    primaryMetric: 'conversion',
    secondaryMetrics: ['engagement_time', 'items_viewed'],
    minSampleSize: 1500,
  },
};
```
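The destinations in this configuration are path templates. The router therefore has to pull values such as `:id` out of the incoming link and substitute them into the chosen variant's destination. The following is a minimal sketch of two such helpers. The names `matchRoute` and `fillTemplate` are hypothetical. Values that do not appear in the link, such as `:category`, are assumed to be supplied by an app-side lookup.

```javascript
// Hypothetical helper: match a pattern like '/product/:id' against '/product/123'.
// Returns { id: '123' } on a match, or null if the path does not fit the pattern.
function matchRoute(pattern, path) {
  const patternParts = pattern.split('/').filter(Boolean);
  const pathParts = path.split('/').filter(Boolean);
  if (patternParts.length !== pathParts.length) return null;

  const params = {};
  for (let i = 0; i < patternParts.length; i++) {
    const part = patternParts[i];
    if (part.startsWith(':')) {
      params[part.slice(1)] = decodeURIComponent(pathParts[i]);
    } else if (part !== pathParts[i]) {
      return null; // a static segment differs
    }
  }
  return params;
}

// Hypothetical helper: replace every ':name' placeholder in a destination template.
// Throws if a placeholder has no value, rather than navigating to a broken path.
function fillTemplate(template, values) {
  return template.replace(/:(\w+)/g, (placeholder, key) => {
    if (!Object.prototype.hasOwnProperty.call(values, key)) {
      throw new Error(`No value for ${placeholder} in ${template}`);
    }
    return encodeURIComponent(values[key]);
  });
}

const routeParams = matchRoute('/product/:id', '/product/123');
console.log(fillTemplate('/collection/:category?highlight=:id', { ...routeParams, category: 'shoes' }));
```

Throwing on a missing value is one design choice. Falling back to the default route is another, and it may suit production apps better.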

Variant Assignment

Use a deterministic hash for consistent assignment:

```javascript
// storage is an app-provided synchronous key-value store
function assignVariant(userId, experimentId) {
  const experiment = destinationExperiments[experimentId];
  const storageKey = `experiment_${experimentId}_${userId}`;

  // Reuse an existing assignment if that variant is still in the config
  const existingId = storage.get(storageKey);
  const existing = experiment.variants.find((v) => v.id === existingId);
  if (existing) return existing;

  // Deterministic assignment based on user ID
  const hash = djb2Hash(`${userId}-${experimentId}`);
  const totalWeight = experiment.variants.reduce((sum, v) => sum + v.weight, 0);
  let bucket = hash % totalWeight;

  for (const variant of experiment.variants) {
    bucket -= variant.weight;
    if (bucket < 0) {
      storage.set(storageKey, variant.id); // store the ID, not the whole object
      return variant;
    }
  }

  return experiment.variants[0]; // Fallback, only reached if the weights are invalid
}

// djb2 string hash, kept in the unsigned 32-bit range
function djb2Hash(str) {
  let hash = 5381;
  for (let i = 0; i < str.length; i++) {
    // hash * 33 + charCode, truncated to 32 bits by >>> 0
    hash = ((hash << 5) + hash + str.charCodeAt(i)) >>> 0;
  }
  return hash;
}
```
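Deterministic hashing should split users close to the configured weights. Bugs elsewhere, such as a variant that crashes before its impression fires, can still skew the observed counts. A common sanity check is a sample ratio mismatch (SRM) test, which is a chi-square goodness-of-fit test on assigned users per variant. The following is a minimal sketch. It assumes you can count assigned users per variant. The function name and the strict p = 0.001 threshold are illustrative choices. Critical values are hard-coded only for two to four variants.

```javascript
// Chi-square critical values at p = 0.001 for 1 to 3 degrees of freedom
const CHI_SQUARE_CRITICAL_P001 = { 1: 10.828, 2: 13.816, 3: 16.266 };

// Compare observed assignment counts with the counts the weights predict.
function checkSampleRatio(observedCounts, weights) {
  const total = observedCounts.reduce((sum, n) => sum + n, 0);
  const totalWeight = weights.reduce((sum, w) => sum + w, 0);

  let chiSquare = 0;
  observedCounts.forEach((observed, i) => {
    const expected = (total * weights[i]) / totalWeight;
    chiSquare += (observed - expected) ** 2 / expected;
  });

  const degreesOfFreedom = observedCounts.length - 1;
  const critical = CHI_SQUARE_CRITICAL_P001[degreesOfFreedom];
  if (critical === undefined) {
    throw new Error(`No critical value stored for ${degreesOfFreedom} degrees of freedom`);
  }
  return { chiSquare, mismatch: chiSquare > critical };
}

console.log(checkSampleRatio([5000, 5000], [50, 50])); // balanced: no mismatch
console.log(checkSampleRatio([5300, 4700], [50, 50])); // skewed: mismatch
```

A mismatch means the assignment or tracking pipeline is broken. Fix it before reading any conversion results.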

Tracking and Analysis

Event Tracking

Track the complete funnel for each variant:

```javascript
// Impression (user saw the destination)
analytics.track('destination_viewed', {
  experimentId: 'product_destination_v2',
  variantId: 'product_page',
  productId: 'prod_123',
  source: 'ad_campaign',
});

// Engagement (user interacted)
analytics.track('destination_engaged', {
  experimentId: 'product_destination_v2',
  variantId: 'product_page',
  action: 'add_to_cart',
  productId: 'prod_123',
});

// Conversion (user completed the goal)
analytics.track('destination_converted', {
  experimentId: 'product_destination_v2',
  variantId: 'product_page',
  conversionType: 'purchase',
  revenue: 42.50,
});
```
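Every funnel event must carry the same `experimentId` and `variantId`. One way to avoid mismatched IDs is to bind that context once and reuse it. The following is a small sketch. `experimentTracker` is a hypothetical name, and `analytics` stands for any client with a `track(eventName, properties)` method.

```javascript
// Return a track function that always attaches the experiment context.
function experimentTracker(analytics, experimentId, variantId) {
  return function trackWithContext(eventName, properties = {}) {
    // Context goes last so callers cannot accidentally overwrite it
    analytics.track(eventName, { ...properties, experimentId, variantId });
  };
}

// Usage with a stand-in client that records calls
const recorded = [];
const fakeAnalytics = { track: (name, props) => recorded.push({ name, props }) };

const track = experimentTracker(fakeAnalytics, 'product_destination_v2', 'product_page');
track('destination_viewed', { productId: 'prod_123', source: 'ad_campaign' });
track('destination_converted', { conversionType: 'purchase', revenue: 42.5 });
console.log(recorded);
```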

Results Analysis

```javascript
// countEvents and sumRevenue stand in for queries against your analytics backend
async function analyzeExperiment(experimentId) {
  const experiment = destinationExperiments[experimentId];

  for (const variant of experiment.variants) {
    const filter = { experimentId, variantId: variant.id };
    const impressions = await countEvents('destination_viewed', filter);
    const engagements = await countEvents('destination_engaged', filter);
    const conversions = await countEvents('destination_converted', filter);
    const revenue = await sumRevenue('destination_converted', filter);

    // Avoid NaN/Infinity for variants with no traffic yet
    if (impressions === 0) {
      console.log(variant.id, { impressions, note: 'No impressions yet' });
      continue;
    }

    console.log(variant.id, {
      impressions,
      engagementRate: (engagements / impressions * 100).toFixed(2) + '%',
      conversionRate: (conversions / impressions * 100).toFixed(2) + '%',
      revenuePerImpression: (revenue / impressions).toFixed(2),
      avgOrderValue: conversions > 0 ? (revenue / conversions).toFixed(2) : 'N/A',
    });
  }
}
```
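The counts above treat every event equally. A user who adds three items to the cart therefore counts as three engagements, and rates can exceed 100%. The following sketch counts distinct users per stage instead. It assumes that each tracked event also carries a `userId`, which the tracking calls above do not include. It also assumes that events are available as a plain array. The function and constant names are illustrative.

```javascript
// Per-variant funnel rates that count each user at most once per stage.
// Assumes events look like { name, variantId, userId }.
const FUNNEL_STAGES = ['destination_viewed', 'destination_engaged', 'destination_converted'];

function funnelByVariant(events) {
  // variantId -> Map(stage -> Set of userIds)
  const usersByVariant = new Map();

  for (const { name, variantId, userId } of events) {
    if (!FUNNEL_STAGES.includes(name)) continue;
    if (!usersByVariant.has(variantId)) {
      usersByVariant.set(variantId, new Map(FUNNEL_STAGES.map((stage) => [stage, new Set()])));
    }
    usersByVariant.get(variantId).get(name).add(userId);
  }

  const results = {};
  for (const [variantId, stages] of usersByVariant) {
    const viewers = stages.get('destination_viewed');
    // Only count later stages for users who were also seen at the destination
    const reached = (stage) => [...stages.get(stage)].filter((id) => viewers.has(id)).length;
    results[variantId] = {
      viewers: viewers.size,
      engagementRate: viewers.size ? reached('destination_engaged') / viewers.size : 0,
      conversionRate: viewers.size ? reached('destination_converted') / viewers.size : 0,
    };
  }
  return results;
}

const events = [
  { name: 'destination_viewed', variantId: 'product_page', userId: 'u1' },
  { name: 'destination_engaged', variantId: 'product_page', userId: 'u1' },
  { name: 'destination_engaged', variantId: 'product_page', userId: 'u1' },
  { name: 'destination_converted', variantId: 'product_page', userId: 'u1' },
  { name: 'destination_viewed', variantId: 'product_page', userId: 'u2' },
];
console.log(funnelByVariant(events));
```

Here the repeated `destination_engaged` event from `u1` counts once, so the engagement rate for `product_page` is 50% rather than 100%.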

Statistical Significance

```javascript
// Two-proportion z-test with a pooled standard error, two-tailed
function isSignificant(controlConversions, controlTotal, treatmentConversions, treatmentTotal) {
  const p1 = controlConversions / controlTotal;
  const p2 = treatmentConversions / treatmentTotal;
  const pPooled = (controlConversions + treatmentConversions) / (controlTotal + treatmentTotal);
  const se = Math.sqrt(pPooled * (1 - pPooled) * (1 / controlTotal + 1 / treatmentTotal));

  // se is 0 when both variants convert at 0% or 100%; there is no difference to test
  const z = se > 0 ? (p2 - p1) / se : 0;

  // |z| > 1.96 means p < 0.05 (95% confidence); |z| > 2.58 means p < 0.01 (99%)
  return {
    zScore: z.toFixed(3),
    significant: Math.abs(z) > 1.96,
    lift: p1 > 0 ? ((p2 - p1) / p1 * 100).toFixed(1) + '%' : 'N/A',
    confidence: Math.abs(z) > 2.58 ? '99%' : Math.abs(z) > 1.96 ? '95%' : 'Not significant',
  };
}
```
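A z-score indicates whether a difference is likely real, but not how large it might be. A common companion is a confidence interval for the difference in conversion rates. The following is a minimal sketch that uses the unpooled (Wald) standard error. This is a normal approximation and is less reliable for small samples or for rates near 0% or 100%. The function name and example counts are illustrative.

```javascript
// 95% Wald confidence interval for (treatment rate - control rate),
// using the unpooled standard error of the difference.
function differenceConfidenceInterval(
  controlConversions, controlTotal, treatmentConversions, treatmentTotal, z = 1.96
) {
  const p1 = controlConversions / controlTotal;
  const p2 = treatmentConversions / treatmentTotal;
  const se = Math.sqrt((p1 * (1 - p1)) / controlTotal + (p2 * (1 - p2)) / treatmentTotal);
  const diff = p2 - p1;
  return { diff, lower: diff - z * se, upper: diff + z * se };
}

// Example: 120 of 4,000 control users vs. 160 of 4,000 treatment users convert
console.log(differenceConfidenceInterval(120, 4000, 160, 4000));
```

In this example, the interval runs from roughly +0.2 to +1.8 percentage points. The treatment likely helps, but the size of the gain is still quite uncertain.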

Common Test Patterns

Pattern 1: Product Page vs. Collection View

When a user clicks a product ad, do they convert better landing on the product detail page or seeing the product in a browsable collection?

Typical result: Product detail pages win for high-intent sources (search ads, email). Collection views win for discovery sources (social ads, content shares).

Pattern 2: Custom Landing vs. Existing Screen

Should you build a custom campaign landing page or route to an existing app screen?

Typical result: Custom landing pages win for conversion rate but cost more to build and maintain. Existing screens win for ROI when the uplift doesn't justify the build cost.
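Once a test gives you both conversion rates, deciding whether the uplift justifies the build cost is simple arithmetic. The following sketch estimates a payback period. All inputs are illustrative assumptions: monthly clicks, the two conversion rates, average order value, and build and maintenance cost. Using revenue rather than margin overstates the gain.

```javascript
// Months until a custom landing page pays back its build cost,
// compared with routing the same traffic to an existing screen.
function landingPagePaybackMonths({
  monthlyClicks, customRate, existingRate, avgOrderValue, buildCost, monthlyMaintenance,
}) {
  const extraRevenue = monthlyClicks * (customRate - existingRate) * avgOrderValue;
  const netMonthlyGain = extraRevenue - monthlyMaintenance;
  return netMonthlyGain > 0 ? buildCost / netMonthlyGain : Infinity; // Infinity: never pays back
}

const months = landingPagePaybackMonths({
  monthlyClicks: 20000,
  customRate: 0.05,
  existingRate: 0.04,
  avgOrderValue: 40,
  buildCost: 8000,
  monthlyMaintenance: 500,
});
console.log(months.toFixed(2));
```

With these made-up numbers, the page pays for itself in about a month. Halve the traffic or the uplift, and the existing screen starts to look like the better investment.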

Pattern 3: Auth-First vs. Content-First

For new users arriving via deep links, should they sign up first or see content first?

Typical result: Content-first wins by 20-40% for signup completion because users see value before committing. Auth-first wins for time-sensitive actions (limited offers, expiring content).

Best Practices

1. Test One Variable at a Time

Only change the destination, not the copy, design, or pricing. If you change several things at once, you can't tell which change produced the result.

2. Run for a Minimum of 2 Weeks

Day-of-week effects are real. Weekend traffic behaves differently from weekday traffic. Run every test for at least 14 days.
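The 14-day floor combines naturally with the sample-size requirement. The following sketch plans a test's length as the longer of the two, rounded up to whole weeks so that every day of the week is equally represented. It assumes clicks are spread evenly and that each click is a new user. The function name is illustrative.

```javascript
// Planned test length in days: long enough to collect the required sample,
// at least minDays, and rounded up to whole weeks.
function plannedTestDays(requiredPerVariant, variantCount, dailyClicks, minDays = 14) {
  const daysForSample = Math.ceil((requiredPerVariant * variantCount) / dailyClicks);
  const days = Math.max(minDays, daysForSample);
  return Math.ceil(days / 7) * 7;
}

console.log(plannedTestDays(5300, 2, 1000)); // 14
console.log(plannedTestDays(5300, 2, 100)); // 112
```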

3. Segment Your Results

The winning variant might differ by source:

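```javascript
// getExperimentResults is a placeholder for your analytics query. The source
// values must match the `source` property sent with the tracking events.
async function analyzeBySource(experimentId) {
  const sources = ['ad', 'email', 'social', 'referral', 'organic'];

  for (const source of sources) {
    const results = await getExperimentResults(experimentId, { source });
    console.log(source, results);
  }
}
```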
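Checking five sources means running five significance tests, and the chance that at least one shows a spurious win grows with each test. One conservative remedy is a Bonferroni correction, which divides the significance level by the number of segments. The following is a minimal sketch. It assumes each segment's counts are already available as plain numbers; the `segments` shape and the function names are illustrative. The p-value uses the Abramowitz and Stegun 7.1.26 approximation of the error function rather than a statistics library.

```javascript
// Two-sided p-value for a z-score: 1 - erf(|z| / sqrt(2)),
// using the Abramowitz & Stegun 7.1.26 approximation (error below about 1.5e-7).
function twoSidedPValue(z) {
  const x = Math.abs(z) / Math.SQRT2;
  const t = 1 / (1 + 0.3275911 * x);
  const poly = t * (0.254829592 + t * (-0.284496736 + t * (1.421413741 +
    t * (-1.453152027 + t * 1.061405429))));
  return poly * Math.exp(-x * x);
}

// Pooled two-proportion z-test per segment, against a Bonferroni-adjusted threshold.
function analyzeSegments(segments, alpha = 0.05) {
  const threshold = alpha / segments.length;
  return segments.map(({ source, control, treatment }) => {
    const p1 = control.conversions / control.total;
    const p2 = treatment.conversions / treatment.total;
    const pooled = (control.conversions + treatment.conversions) / (control.total + treatment.total);
    const se = Math.sqrt(pooled * (1 - pooled) * (1 / control.total + 1 / treatment.total));
    const z = se > 0 ? (p2 - p1) / se : 0;
    const pValue = twoSidedPValue(z);
    return { source, z, pValue, significant: pValue < threshold };
  });
}

const segment = {
  control: { conversions: 120, total: 4000 },
  treatment: { conversions: 160, total: 4000 },
};
const fiveSources = ['ad', 'email', 'social', 'referral', 'organic']
  .map((source) => ({ source, ...segment }));
console.log(analyzeSegments(fiveSources)[0]);
console.log(analyzeSegments([fiveSources[0]])[0]);
```

Take a segment where 120 of 4,000 control users and 160 of 4,000 treatment users convert. This gives p ≈ 0.015, which is significant on its own. It is not significant against the 0.01 threshold that applies when five sources are tested.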

4. Track Long-Term Impact

A variant that wins on immediate conversion might lose on D30 retention. Track downstream metrics for at least 30 days before declaring a permanent winner.
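Retention per variant can be computed from the same assignment data. The following is a minimal sketch. It assumes that for each user you have the assigned variant, a timestamp for their first deep link visit, and a list of later session timestamps, all in milliseconds. It counts a user as retained if they returned 30 or more days after that first visit. Some teams define D30 as activity on day 30 itself, so adjust the rule to match your analytics. The function name and sample data are illustrative.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Share of eligible users per variant who returned `days` or more days after their
// first deep link visit. Users who arrived too recently to qualify are excluded.
function retentionByVariant(users, asOf, days = 30) {
  const windowMs = days * DAY_MS;
  const totals = {};

  for (const { variantId, firstVisit, sessions } of users) {
    if (asOf - firstVisit < windowMs) continue; // not yet eligible
    if (!totals[variantId]) totals[variantId] = { eligible: 0, retained: 0 };
    totals[variantId].eligible += 1;
    if (sessions.some((time) => time - firstVisit >= windowMs)) {
      totals[variantId].retained += 1;
    }
  }

  const rates = {};
  for (const [variantId, { eligible, retained }] of Object.entries(totals)) {
    rates[variantId] = { eligible, retention: retained / eligible };
  }
  return rates;
}

const asOf = 100 * DAY_MS;
const users = [
  { variantId: 'product_page', firstVisit: 0, sessions: [5 * DAY_MS, 40 * DAY_MS] },
  { variantId: 'product_page', firstVisit: 0, sessions: [3 * DAY_MS] },
  { variantId: 'product_page', firstVisit: 80 * DAY_MS, sessions: [] }, // too recent
  { variantId: 'product_in_collection', firstVisit: 10 * DAY_MS, sessions: [45 * DAY_MS] },
];
console.log(retentionByVariant(users, asOf));
```

Excluding users who have not yet had 30 days to return keeps a variant that launched later, or grew faster recently, from looking artificially worse.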

For A/B testing features, see Tolinku A/B testing. For test setup, see the A/B testing documentation.
